AI Trajectories Tool

The AI Trajectories Tool is an interactive teaching resource that explores six hypothetical models linking decisions about AI development and use (“cause”) to real-world outcomes (“effect”). It’s designed to spark structured reflection on the pathways AI might take, and the choices — at personal, organizational, institutional, and societal levels — that shape them.

It was developed in part to help students and faculty at Arizona State University explore responsible and beneficial AI through the lens of cause and effect, using a range of different models.

→ Open the tool: raitool.org

What the tool does

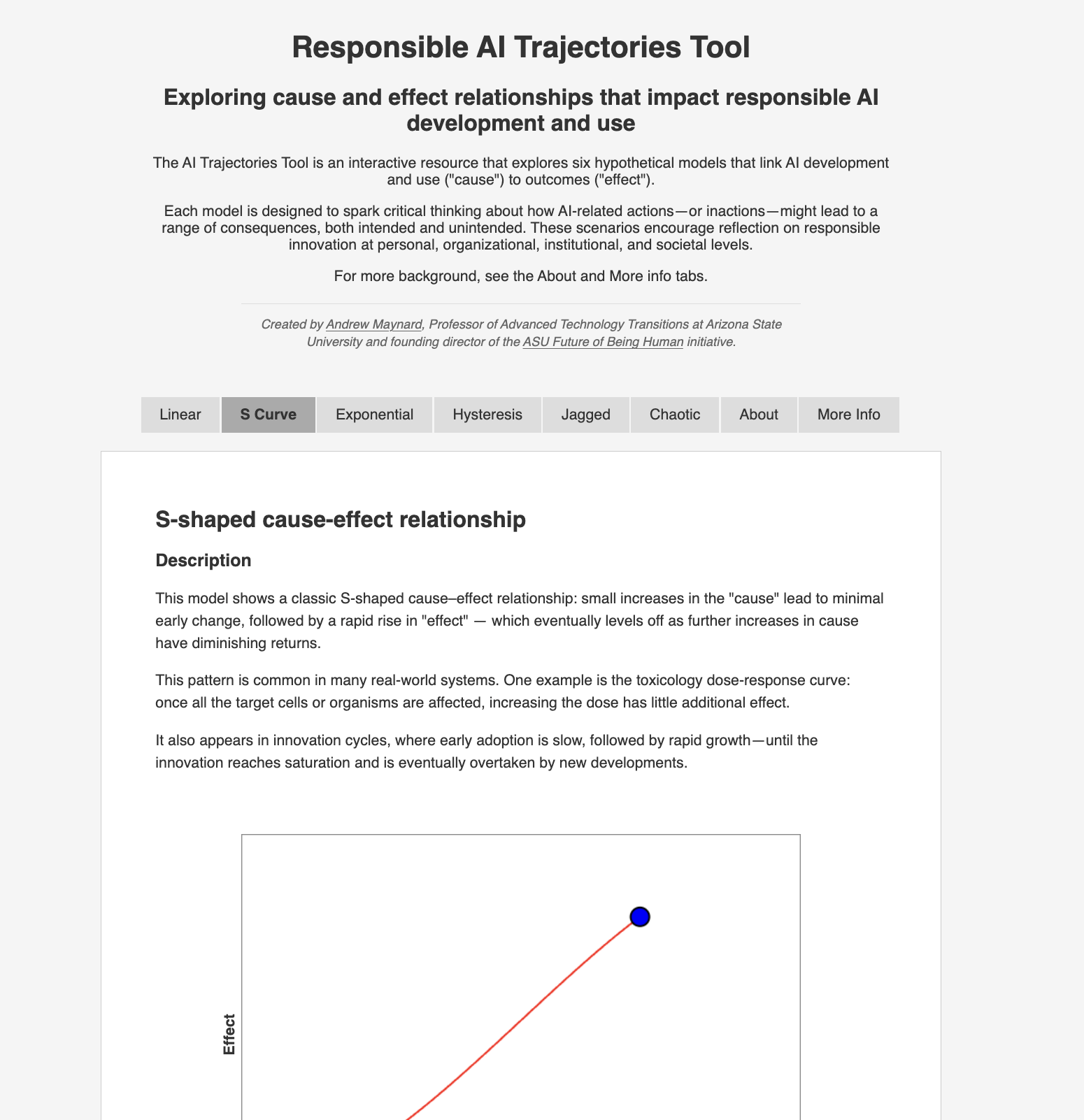

Each of the six models in the tool presents a different cause-and-effect relationship — some intuitive, some counterintuitive — that reflects how AI development and deployment translate into consequences. Users move through the models interactively, adjusting variables and watching trajectories shift in response.

The models are not predictions. Rather, they are scaffolds for thinking: frames that help users notice and examine their assumptions about how AI use leads to effects in the world, consider what those assumptions might be missing, and think differently about responsible and beneficial AI.

Why cause and effect?

A lot of conversations around responsible AI focus on principles and frameworks in the abstract. But the operational question (how do specific decisions actually lead to specific consequences?) often gets less attention. A mental model based on cause and effect helps move the idea of “responsibility” from aspirational to actionable.

This is where the AI Trajectories Tool comes in. It offers an accessible way to stress-test intuitions, surface trade-offs, and give students, teams, and decision-makers a shared vocabulary for talking about how AI gets from “what we choose to do” to “what actually happens.”

Using it in teaching

The tool is openly available under a Creative Commons license (CC BY-NC-SA 4.0) and is already being used in undergraduate teaching at ASU. It works well as:

- A pre-class reading or hands-on activity ahead of discussions on responsible AI, technology assessment, or innovation governance

- A scaffold for group reflection on a specific case or scenario

- A jumping-off point for students designing their own approaches to responsible innovation

- A shared reference in courses spanning ethics, science and technology studies, public policy, and innovation studies

Educators are welcome to incorporate the tool into their own teaching materials with attribution.

Background

The AI Trajectories Tool draws on my broader work on risk and Risk Innovation, and on understanding the non-linear pathways through which emerging technologies translate into real-world consequences.

For related writing on responsible AI and advanced technology transitions, see the Future of Being Human Substack and the resources here on AI and Being Human.

More Information

The AI Trajectories Tool at raitool.org includes a More Info tab that provides additional context, resources, and readings.

Suggested citation

Maynard, A. (2025). AI Trajectories Tool. Future of Being Human, Arizona State University. raitool.org